Timestamp aware Aberrant Detection and Analysis in Big Visual Data using Deep Learning Architecture

Science and Engineering Research Board (SERB): SERB/EEQ/2017/000673

Funding Agency: Science and Engineering Research Board, Department of Science and Technology (SERB-DST, 2018)

Principal Investigator: Dr. Santosh Kumar Vipparthi

JRF/Ph.D. Scholar: Kuldeep Marotirao Biradar

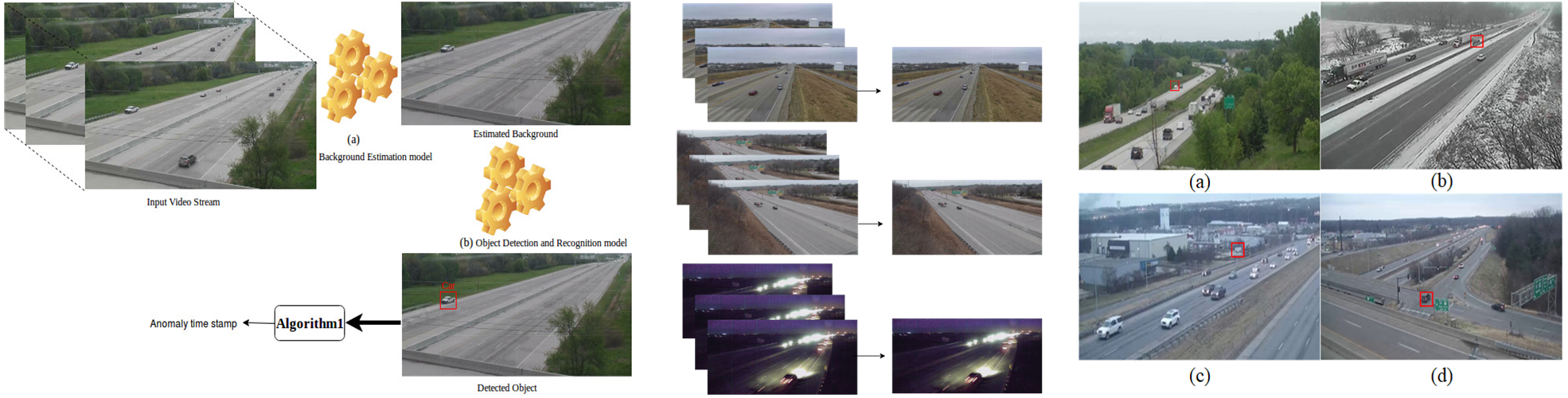

Introduction: The proposed system shifts the onus of detecting aberrant situations from the human operator to the video surveillance system itself.

Present technologies fail to recognize aberrations in video sequences. These aberrations occur over a small time window, so recognizing them together with their timeframe in big visual data is a challenging task. Hence, our focus is on problems where we are given a set of nominal training video samples and, based on these samples, need to determine whether or not a test video contains an aberration and at what instant it occurs. We aim to significantly reduce time and human effort by automating the task, and to improve accuracy by recognizing aberrations along with their timestamps. Further, we exploit the aberrant activity of an object by modeling the rich motion patterns in a selected region, effectively capturing the underlying intrinsic structure they form in the video. Such a system can benefit intelligence agencies, banks, departmental stores, highway traffic monitoring, airport terminal check-in, sports, the medical field, and robotics.
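The problem statement above (learn from nominal videos, then decide whether a test video contains an aberration and when) can be illustrated with a minimal sketch. This is not the project's deep learning architecture, which is not described here. It assumes that some model has already produced a per-frame anomaly score (for example, a reconstruction error), and the function names, the `k` multiplier, and the 30 fps frame rate are all hypothetical choices made for illustration.

```python
from statistics import mean, pstdev


def fit_threshold(nominal_scores, k=3.0):
    """Set the threshold to mean + k * std of scores seen on nominal frames.

    `nominal_scores` are assumed per-frame anomaly scores from normal videos.
    """
    return mean(nominal_scores) + k * pstdev(nominal_scores)


def detect_aberrations(test_scores, threshold, fps=30.0):
    """Return (start_sec, end_sec) intervals where the score exceeds the threshold."""
    intervals = []
    start = None  # index of the first frame of the current aberrant run
    for i, score in enumerate(test_scores):
        if score > threshold and start is None:
            start = i
        elif score <= threshold and start is not None:
            intervals.append((start / fps, i / fps))
            start = None
    if start is not None:  # the run continues to the end of the video
        intervals.append((start / fps, len(test_scores) / fps))
    return intervals


# Toy data: scores from nominal frames, then a test video with a short spike.
nominal = [0.10, 0.12, 0.11, 0.09, 0.10, 0.13, 0.11, 0.10]
threshold = fit_threshold(nominal)

test = [0.10] * 60 + [0.90] * 15 + [0.11] * 45
events = detect_aberrations(test, threshold, fps=30.0)
contains_aberration = bool(events)
print(contains_aberration, events)
```

With this toy input the spike covers frames 60 to 74, so the sketch reports a single aberrant interval from 2.0 s to 2.5 s. A fixed statistical threshold is only one simple option; it is used here because it needs nothing beyond the nominal samples.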

PROJECT ACTIVITIES AND FINDINGS

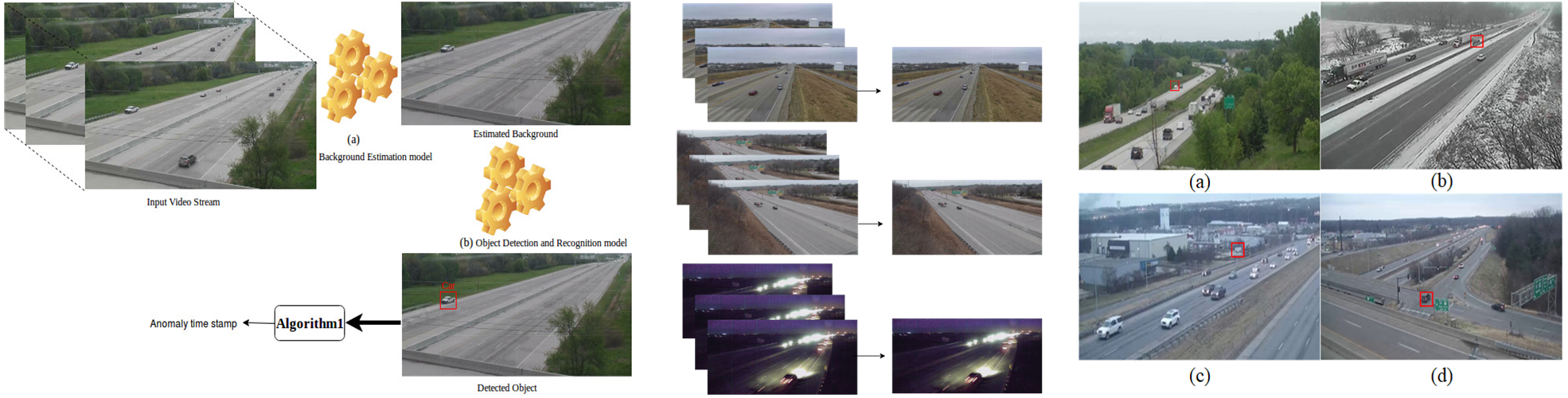

Anomaly Detection in Traffic Videos

New Anomaly Dataset

A custom dataset was generated in a staged, controlled environment. We shot from four strategically placed cameras simultaneously to capture multiple views of the same scene. The videos were recorded at four different locations at different times of the day. The scenes include normal activity as well as fights in different scenarios, snatching, kidnapping, etc. Scenes were shot indoors and outdoors, in natural light, artificial light, and low light, to cover illumination changes. The videos were recorded from varied distances to capture subjects of varying size. Post-processing yielded usable clips of approx. 90 minutes (90 × 60 × 30 × 4 = 648,000 frames). Snippets of the dataset are depicted in the figure.
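The frame count quoted above can be checked with a short calculation. The text gives only the raw product, so reading the factor 30 as the frame rate (frames per second) and the factor 4 as the number of camera views is an assumption.

```python
# Frame count for the staged dataset, following the product given in the text.
minutes = 90               # approximate usable footage, from the text
seconds_per_minute = 60
frames_per_second = 30     # assumption: the factor 30 is the frame rate
camera_views = 4           # assumption: the factor 4 is the four cameras

total_frames = minutes * seconds_per_minute * frames_per_second * camera_views
print(total_frames)
```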

PUBLICATIONS

5. Murari Mandal, Lav Kush Kumar, Mahipal Singh Saran, Santosh Kumar Vipparthi, “MotionRec: A Unified Deep Framework for Moving Object Recognition,” IEEE Winter Conference on Applications of Computer Vision (WACV), Snowmass Village, Colorado, US, 2020.

6. Monu Verma, Santosh Kumar Vipparthi, Girdhari Singh, Subrahmanyam Murala, “LEARNet: Dynamic Imaging Network for Micro Expression Recognition,” IEEE Transactions on Image Processing, 2019 (Impact Factor 6.79).

10. Kuldeep Marotirao Biradar, Ayushi Gupta, Murari Mandal, Santosh Kumar Vipparthi, “Challenges in Time-Stamp Aware Anomaly Detection in Traffic Videos,” in CVPR Workshops (CVPRW), Long Beach, California, US, 2019.

12. Monu Verma, Jaspreet Kaur Bhui, Santosh Kumar Vipparthi, Girdhari Singh, “EXPERTNet: Exigent Features Preservative Network for Facial Expression Recognition,” ACM 11th International Conference on Computer Vision, Graphics and Image Processing (ICVGIP), Hyderabad, India, 2018.

15. Monu Verma, Prafulla Saxena, Santosh Kumar Vipparthi, Girdhari Singh, S K Nagar, “DeFINet: Portable CNN Network for Facial Expression Recognition,” IEEE International Conference on Information and Communication Technology for Competitive Strategies, 2019.

16. Murari Mandal, Manal Shah, Prashant Meena, Sanhita Devi, Santosh Kumar Vipparthi, “AVDNet: A Small-Sized Vehicle Detection Network for Aerial Visual Data,” IEEE Geoscience and Remote Sensing Letters, doi: 10.1109/LGRS.2019.2923564 (Impact Factor 3.534).